Can AI Handle Accessibility for Me?

Can AI tools create accessible components for me already? YES or NO?

Here are my 2 cents on the issue :)

AI and accessible code

Is it true that AI models are biased toward inaccessible code?

There might be a fundamental problem with how AI models learn to write code. These models are trained on a huge pool of public code and web content, and most of that code is not very accessible:

The 2025 WebAIM Million report analyzes the home pages of the top 1,000,000 websites for automatically detectable Web Content Accessibility Guidelines (WCAG) failures. In 2025, 94.8% of home pages had detected WCAG failures.

The actual number of issues across the web is likely to be higher because automated scans only catch a subset of WCAG criteria. If the majority of the web is not accessible, AI can by default reproduce exactly the same a11y issues. The old principle still holds: garbage in, garbage out, just at a much larger scale.

Until the quality of code across the web improves — or developers patiently teach their AI tools using verified accessible components and resources — this problem is likely to stay with us.

AI coding assistants

Own experience

By default, AI coding assistants (GitHub Copilot, OpenAI Codex, Claude Code, Cursor, etc.) can help generate code that follows basic accessibility principles for simple or typical UI blocks. But based on my experience, they can also introduce a11y issues.

Some examples I noticed just recently:

- Adding `aria-label` to elements that don’t support it

- Overusing ARIA roles

- Creating links and buttons with meaningless labels

- Illegally wrapping product/post cards in `<a>` tags

- Choosing colors that don’t meet contrast requirements

- Poor keyboard navigation and focus management for more complex components (like dropdowns, carousels, menus etc.)

- Assistive technology announcements are often missing or incorrect (e.g., no live region updates, or wrong ARIA attributes for dynamic content)
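Some items on this list can be checked mechanically. As a minimal sketch, the contrast item can be tested with the contrast-ratio formula from WCAG 2.x. The function names below (`parseHex`, `relativeLuminance`, `contrastRatio`, `meetsAA`) are made up for illustration, and the sketch assumes 6-digit hex colors with no transparency.

```typescript
// Sketch of the WCAG 2.x contrast-ratio formula. All helper names are hypothetical.

type Rgb = [number, number, number];

// Parse a 6-digit hex color such as "#1a2b3c" into 0-255 channels.
function parseHex(hex: string): Rgb {
  const match = /^#?([0-9a-f]{6})$/i.exec(hex.trim());
  if (!match) {
    throw new Error(`Unsupported color format: ${hex}`);
  }
  const value = parseInt(match[1], 16);
  return [(value >> 16) & 0xff, (value >> 8) & 0xff, value & 0xff];
}

// Relative luminance as defined by WCAG 2.x: linearize each sRGB channel,
// then take the weighted sum.
function relativeLuminance(rgb: Rgb): number {
  const linear = rgb.map((channel) => {
    const c = channel / 255;
    return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
  });
  return 0.2126 * linear[0] + 0.7152 * linear[1] + 0.0722 * linear[2];
}

// Contrast ratio between two colors, from 1 (identical) up to about 21.
function contrastRatio(foreground: string, background: string): number {
  const l1 = relativeLuminance(parseHex(foreground));
  const l2 = relativeLuminance(parseHex(background));
  const lighter = Math.max(l1, l2);
  const darker = Math.min(l1, l2);
  return (lighter + 0.05) / (darker + 0.05);
}

// WCAG AA asks for at least 4.5:1 for normal-size text and 3:1 for large text.
function meetsAA(foreground: string, background: string, largeText = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}
```

For example, `meetsAA("#767676", "#ffffff")` passes while `meetsAA("#777777", "#ffffff")` falls just short of 4.5:1, a difference nobody would spot by eye. A check like this only covers solid colors, so text over images or gradients still needs a human look.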

Saying just “make this component accessible” during development helps a bit (especially when you ask it to ultrathink :)), but it is not enough: unfortunately, you need to specify what you want in eeevery detail.

The 2025 WebAIM Million report also highlights that pages with more ARIA usage tend to have more detected errors. This can suggest that some developers (and likely AI models) are using ARIA as a band-aid for underlying accessibility issues, which can lead to the same problems being repeated.

AI tools and models are progressing very fast — about a year ago I saw AI-generated code that regularly contained inaccessible patterns and ARIA attributes that were not just incorrect but did not even exist (!) in the spec. Today, with the latest models, the situation is noticeably better — the baseline quality of generated HTML has improved.
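Made-up ARIA attributes are one of the few problems a very simple script can catch. The sketch below scans a markup string for `aria-*` attribute names and reports those missing from a known list. The list is what I believe WAI-ARIA 1.2 defines (newer drafts add more, such as `aria-description`), the function name is hypothetical, and the regex scan is naive, so treat a hit as a prompt for review, not a verdict.

```typescript
// Attribute names believed to be defined by WAI-ARIA 1.2 (assumption: verify
// against the spec before relying on this list).
const KNOWN_ARIA_ATTRIBUTES = new Set<string>([
  "aria-activedescendant", "aria-atomic", "aria-autocomplete", "aria-busy",
  "aria-checked", "aria-colcount", "aria-colindex", "aria-colspan",
  "aria-controls", "aria-current", "aria-describedby", "aria-details",
  "aria-disabled", "aria-dropeffect", "aria-errormessage", "aria-expanded",
  "aria-flowto", "aria-grabbed", "aria-haspopup", "aria-hidden",
  "aria-invalid", "aria-keyshortcuts", "aria-label", "aria-labelledby",
  "aria-level", "aria-live", "aria-modal", "aria-multiline",
  "aria-multiselectable", "aria-orientation", "aria-owns", "aria-placeholder",
  "aria-posinset", "aria-pressed", "aria-readonly", "aria-relevant",
  "aria-required", "aria-roledescription", "aria-rowcount", "aria-rowindex",
  "aria-rowspan", "aria-selected", "aria-setsize", "aria-sort",
  "aria-valuemax", "aria-valuemin", "aria-valuenow", "aria-valuetext",
]);

// Return each unknown aria-* attribute name found in the markup, once,
// in order of first appearance. This is a plain text scan, not an HTML parser.
function findUnknownAriaAttributes(markup: string): string[] {
  const pattern = /\s(aria-[a-z]+)(?=[\s=>\/])/gi;
  const unknown: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(markup)) !== null) {
    const name = match[1].toLowerCase();
    if (!KNOWN_ARIA_ATTRIBUTES.has(name) && unknown.indexOf(name) === -1) {
      unknown.push(name);
    }
  }
  return unknown;
}
```

Called on `<div aria-labeledby="title" aria-label="Menu">`, it reports `aria-labeledby` (the misspelling of `aria-labelledby`) and leaves `aria-label` alone. It says nothing about whether a valid attribute is used on the right element or with the right value.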

But even “noticeably better” is not “accessible.” I still have to review every component carefully and provide specific instructions to fix issues with semantic HTML, ARIA, keyboard interaction, focus management, and more. If I did not already know what to look for, those issues would have shipped unnoticed.

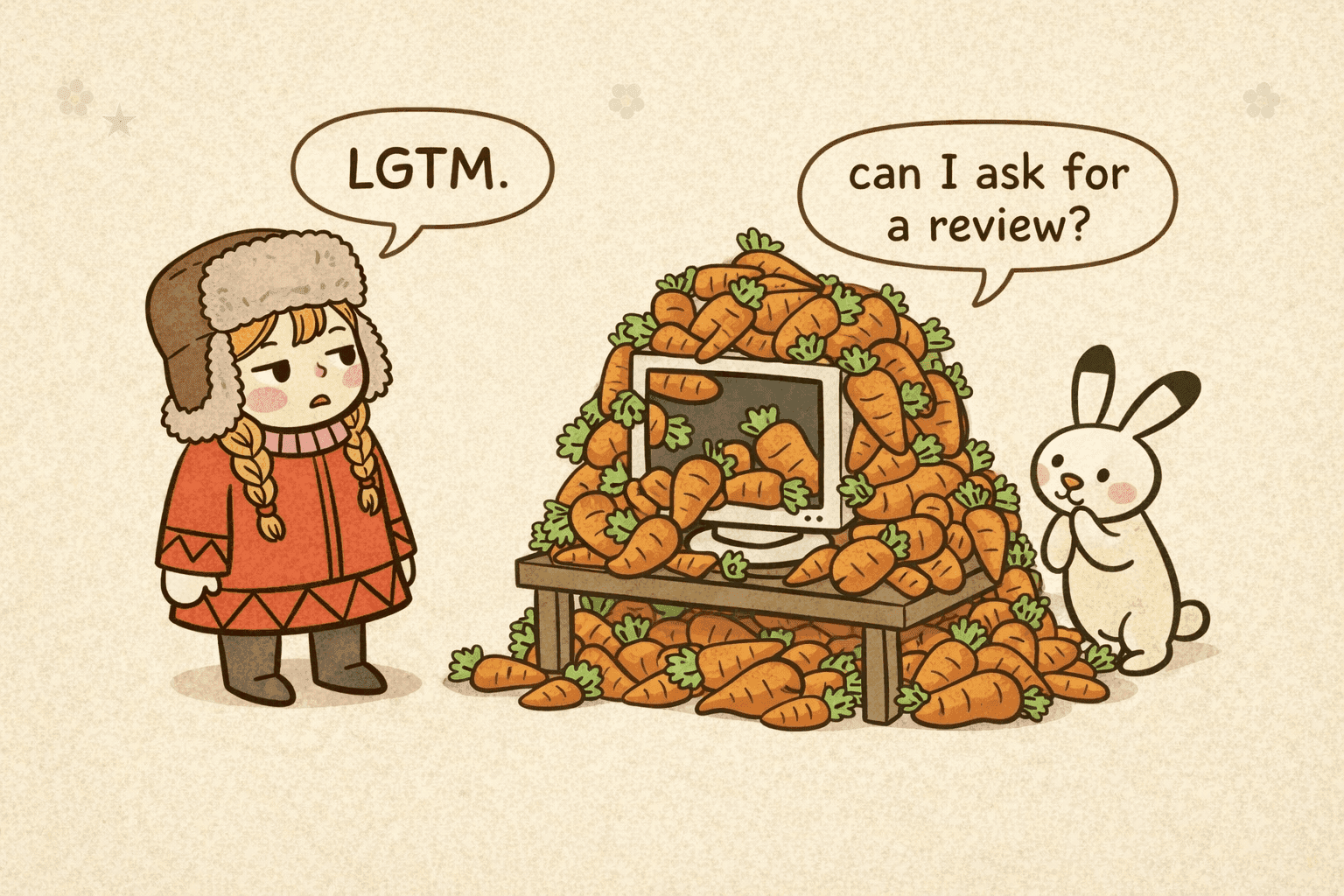

My overall feeling is that most of the time the code looks right, but the experience is sometimes wrong. When AI generates code that looks complete and correct, it is easy to skip the review — especially if you don’t know what to check for.

As more teams adopt AI coding assistants, a new bottleneck is becoming visible: code creation is no longer the most time-consuming part, but code review is. When code is produced faster than it can be thoroughly reviewed, accessibility is one of the first things that might get missed.

Even Anthropic’s engineering blog has some obvious accessibility issues with keyboard navigation, contrast, and valid HTML. I’m sure some of the best engineers work there, which only proves the point: accessibility is very easy to overlook.

AI-powered accessibility overlays

Own experience + research

AI-powered accessibility overlays (widgets that claim they can “fix” a website automatically) may also give you a dangerous overconfidence in the state of your solution.

A lot has been written about this. Here are some of the examples I found most convincing:

- A peer-reviewed study (ACM ASSETS 2024) surveyed 47 blind and low-vision users. Most reported that overlays made accessibility problems worse — overlay screen readers conflicted with their own assistive technology, and users opted to avoid websites with overlays entirely

- A joint statement on overlays by the European Disability Forum and the International Association of Accessibility Professionals (IAAP) states that overlays “do not make the website accessible or compliant with European accessibility legislation”

- Regulators have acted too — in 2025 the FTC required accessiBe to pay $1 million over deceptive claims that its AI-powered product could make websites WCAG-compliant

No overlay can reliably transform non-semantic source code into the semantic structure and interaction model assistive technologies depend on.

In my experience, for web pages with minor visual issues (like text sizing, color, contrast etc.) an overlay might adjust things well enough — but users can already make the same adjustments through their browser or OS settings. For more complex pages, overlays can interfere with JavaScript behavior, break interactions, and make the experience worse than without them (not to mention the performance issues they can cause).

As a developer, I’d prefer to fix the underlying code. As a user, I’d prefer a website that is accessible from the start — not one that relies on a band-aid I have to work around.

Where does AI help the most?

Own experience, scoped to accessibility

I hope I didn’t come across as too negative about AI tools. Quite the opposite: I’m genuinely optimistic about where they’re headed, and I have to admit I enjoy a good vibe-coding session way more than I probably should :D

For me, the biggest benefit right now is that AI can significantly speed up the implementation of logic and well-established UI and UX patterns, leaving more time to focus on complex user experience problems.

In the scope of a11y, AI is also helpful for:

- Drafting alt text — AI can describe what is in an image, but you still need to decide what the purpose of the image is in context (is it decorative? informative? functional?)

- Explaining WCAG criteria — AI is good at translating spec language into practical guidance

- Generating test configurations — CI setups, linter configs, and testing scripts (like the ones in Automate Accessibility Checks in CI)

- Generating test cases — unit and integration tests for accessibility scenarios like keyboard navigation, focus trapping, and ARIA state changes

- Finding relevant resources — documentation, examples, and tools for specific accessibility issues

- Brainstorming solutions — it can suggest approaches, but you still need to evaluate and test them

- Checking against provided checklists — you can ask it to review code against a specific WCAG criterion, but you still need to verify the results
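To make the test-case item above more concrete, here is a small sketch of the kind of logic such tests usually target: the wrap-around step of a focus trap in a modal dialog. The function name and signature are hypothetical, and it deliberately leaves out the DOM part (collecting focusable elements and calling `focus()`), which is where most real bugs hide.

```typescript
// Given the index of the currently focused element, the number of focusable
// elements inside the dialog, and whether Shift is held, return the index
// that should receive focus after Tab so that focus wraps inside the dialog.
function nextTrappedFocusIndex(current: number, count: number, shiftKey: boolean): number {
  if (count <= 0) {
    throw new RangeError("A focus trap needs at least one focusable element");
  }
  const step = shiftKey ? -1 : 1;
  // The double modulo keeps the result non-negative when stepping back from index 0.
  return (((current + step) % count) + count) % count;
}
```

With three focusable elements, Tab on the last one (`nextTrappedFocusIndex(2, 3, false)`) returns 0 and Shift+Tab on the first one (`nextTrappedFocusIndex(0, 3, true)`) returns 2. These edge cases are easy to ask an AI assistant to cover in unit tests, and just as easy to verify by hand with a keyboard.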

It’s important to treat AI output as a starting point, not a final answer.

So, can AI handle accessibility for me?

My answer is…

Sometimes. But not without human verification (yet).

At this moment, no tool (that I know about) can answer the question that actually matters: does this work for a real person using a screen reader, a keyboard, or a switch device? That still requires keyboard testing, testing with assistive technologies, and ideally, feedback from people with disabilities.

What we can do with it now:

- Learn the fundamentals. Understand accessibility principles and best practices so you can review AI-generated code and make informed decisions about what to request, accept, modify, or reject.

- Tune your AI instructions. It’s worth investing time in tuning the project-level AI instructions and rules (e.g., AGENTS.md, SKILL.md): the more specific and detailed your instructions, the better the output will be. It’s quite a task, perfect for the pickiest or should I say, detail-obsessed and experienced person on the team. You can also try out skills created by other contributors and adapt them to your project.

- Invest in automation. A few years ago, a mid-sized team could get by without a strong automation pipeline. Now that AI can produce code faster than anyone can review it, automated checks (linters, CI, accessibility testing) are a safety net you can’t skip.
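An automated check does not have to be big to be useful. As a rough sketch of a project-specific rule that could run next to an established linter, the snippet below flags link and button labels that say nothing on their own. The list of generic phrases and all function names are assumptions for illustration, and the phrase list only covers English.

```typescript
// Hypothetical list of labels that carry no meaning out of context.
const GENERIC_LABELS = new Set<string>([
  "click here", "here", "read more", "learn more", "more", "details", "link", "button",
]);

// Lowercase the label, drop common punctuation and arrows, collapse whitespace.
function normalizeLabel(label: string): string {
  return label
    .toLowerCase()
    .replace(/[.,!?:;…>»›→]/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// A label is treated as meaningless if it is empty or matches a generic phrase.
function isMeaninglessLabel(label: string): boolean {
  const normalized = normalizeLabel(label);
  return normalized === "" || GENERIC_LABELS.has(normalized);
}

// Return the labels from a list (e.g. collected from rendered components) that need attention.
function findMeaninglessLabels(labels: string[]): string[] {
  return labels.filter(isMeaninglessLabel);
}
```

Given `["Read more…", "Read more about contrast rules", ""]`, it returns the first and the last entry. Whether a remaining label is actually helpful in context is still a human call.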

All of these take effort and slow you down at first.

Which raises the question: are we moving faster than our ability to keep up with accessibility, or should I say, quality? (rhetorical question)

See also:

- Run Automated Accessibility Tools — browser extensions and manual tools

- Automate Accessibility Checks in CI — set up automated validation in your pipeline

- Practical accessibility testing — the full testing process

- When code stops being the bottleneck by Kelly Vaughn — on the shift from code creation to code review as the new bottleneck